Streamlets preview

This is an early preview, published on 2020-01-17, before the v0.15 release.

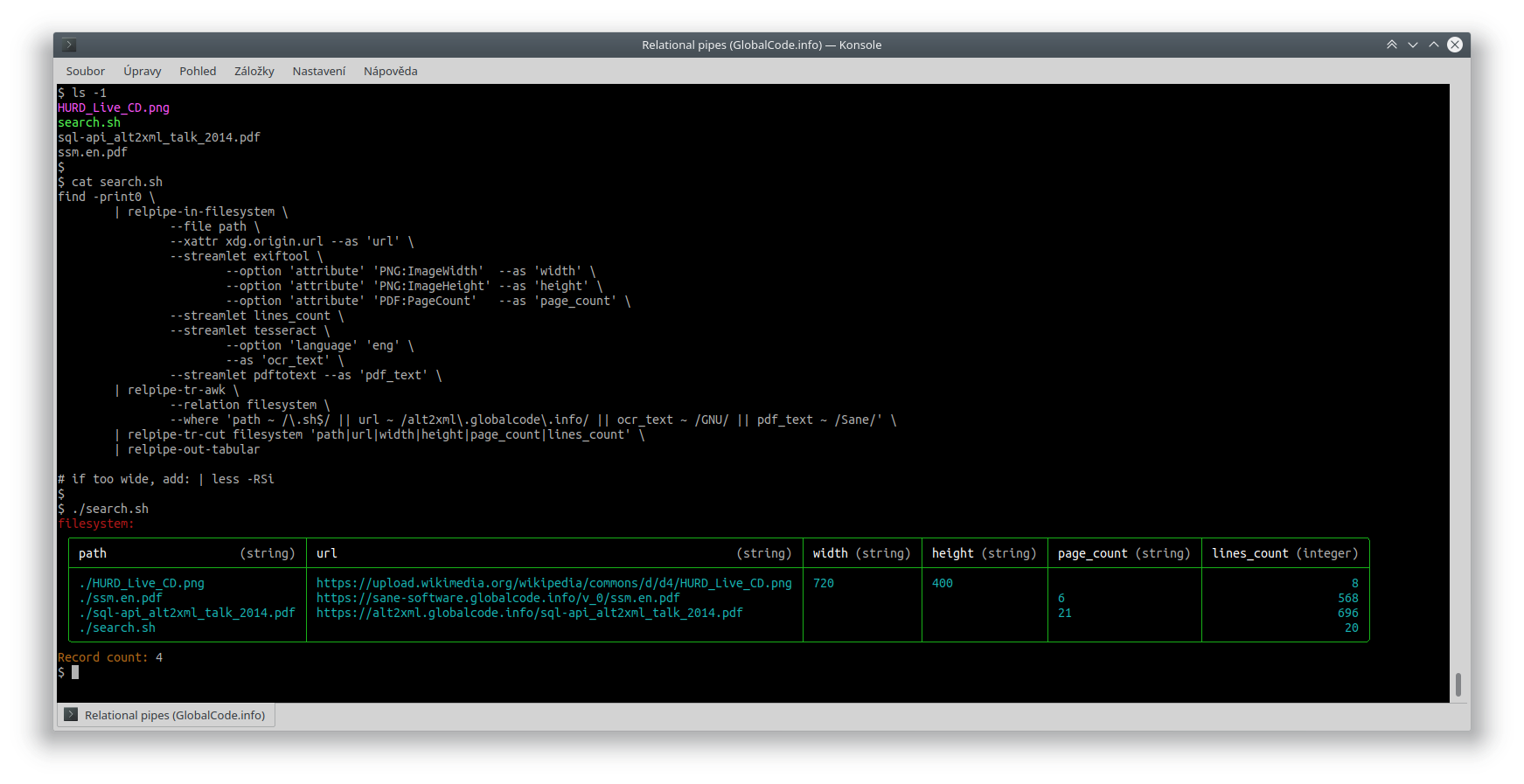

First prepare some files:

    $ wget --xattr https://upload.wikimedia.org/wikipedia/commons/d/d4/HURD_Live_CD.png
    $ wget --xattr https://sane-software.globalcode.info/v_0/ssm.en.pdf
    $ wget --xattr https://alt2xml.globalcode.info/sql-api_alt2xml_talk_2014.pdf
    $ ls -1
    HURD_Live_CD.png
    search.sh
    sql-api_alt2xml_talk_2014.pdf
    ssm.en.pdf

Collect metadata (file path, extended attributes, image size, number of PDF pages, number of text lines, text recognized by OCR in images and plain text extracted from PDF files), filter the results (restriction), select only certain attributes (projection) and format the result as a table:

    find -print0 \
        | relpipe-in-filesystem \
            --file path \
            --xattr xdg.origin.url --as 'url' \
            --streamlet exiftool \
                --option 'attribute' 'PNG:ImageWidth' --as 'width' \
                --option 'attribute' 'PNG:ImageHeight' --as 'height' \
                --option 'attribute' 'PDF:PageCount' --as 'page_count' \
            --streamlet lines_count \
            --streamlet tesseract \
                --option 'language' 'eng' \
                --as 'ocr_text' \
            --streamlet pdftotext --as 'pdf_text' \
        | relpipe-tr-awk \
            --relation 'filesystem' \
            --where 'path ~ /\.sh$/ || url ~ /alt2xml\.globalcode\.info/ || ocr_text ~ /GNU/ || pdf_text ~ /Sane/' \
        | relpipe-tr-cut --relation 'filesystem' --attribute 'path|url|width|height|page_count|lines_count' \
        | relpipe-out-tabular
        # if too wide, add: | less -RSi

This pipeline prints:

    filesystem:
    ╭─────────────────────────────────┬──────────────────────────────────────────────────────────────────────┬────────────────┬─────────────────┬─────────────────────┬───────────────────────╮
    │ path (string)                   │ url (string)                                                         │ width (string) │ height (string) │ page_count (string) │ lines_count (integer) │
    ├─────────────────────────────────┼──────────────────────────────────────────────────────────────────────┼────────────────┼─────────────────┼─────────────────────┼───────────────────────┤
    │ ./HURD_Live_CD.png              │ https://upload.wikimedia.org/wikipedia/commons/d/d4/HURD_Live_CD.png │ 720            │ 400             │                     │                     8 │
    │ ./ssm.en.pdf                    │ https://sane-software.globalcode.info/v_0/ssm.en.pdf                 │                │                 │ 6                   │                   568 │
    │ ./sql-api_alt2xml_talk_2014.pdf │ https://alt2xml.globalcode.info/sql-api_alt2xml_talk_2014.pdf        │                │                 │ 21                  │                   696 │
    │ ./search.sh                     │                                                                      │                │                 │                     │                    21 │
    ╰─────────────────────────────────┴──────────────────────────────────────────────────────────────────────┴────────────────┴─────────────────┴─────────────────────┴───────────────────────╯
    Record count: 4
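To make the two relational terms used in the pipeline concrete, here is a minimal sketch in plain shell and awk that does not need the relpipe tools. Restriction keeps only the records that match a condition; projection keeps only the named attributes. The tab-separated sample data (including the made-up `./notes.txt` record) and the function names `sample_relation`, `restrict_rows` and `project_columns` are hypothetical. They only mimic what `relpipe-tr-awk --where` and `relpipe-tr-cut --attribute` do above and say nothing about how those tools are implemented.

```shell
#!/bin/sh
# Hypothetical sample relation with three attributes per record:
# path, url, lines_count (tab-separated, no header line).
sample_relation() {
    printf '%s\t%s\t%s\n' \
        './HURD_Live_CD.png' 'https://upload.wikimedia.org/wikipedia/commons/d/d4/HURD_Live_CD.png' '8' \
        './search.sh' '' '21' \
        './notes.txt' '' '3'
}

# Restriction: keep records whose path ends in .sh
# or whose url mentions wikimedia.org.
restrict_rows() {
    awk -F '\t' '$1 ~ /\.sh$/ || $2 ~ /wikimedia\.org/'
}

# Projection: keep only the path and lines_count attributes.
project_columns() {
    awk -F '\t' -v OFS='\t' '{ print $1, $3 }'
}

# Restriction first, projection second, as in the pipeline above.
sample_relation | restrict_rows | project_columns
```

The order matters here for the same reason as in the relpipe pipeline: the condition refers to `url`, so the restriction has to run before the projection drops that attribute.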

The screenshot near the top of this page shows how the relpipe pipeline and its output look in the terminal.

The OCR and PDF text extraction (and the other metadata extraction) are done on the fly in the pipeline. OCR in particular may take some time, so in such cases it is usually better to break the pipe in the middle, redirect the intermediate result to a file (which then serves as an index or cache) and reuse it multiple times: just cat the file and continue with the rest of the original pipeline. Multiple such files can simply be concatenated; the format is designed for this use. In most cases, however, this is not necessary and we work with live data.
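The caching idea can be sketched in plain shell. The helper `cached` below is hypothetical and not part of Relational pipes: it runs an expensive producer command only when the cache file is missing or empty, and otherwise just replays the file. The commented usage reuses commands from the example above and assumes the relpipe tools are installed; the file names `index.rp` and `other.rp` are assumptions as well.

```shell
#!/bin/sh
# cached FILE COMMAND [ARGS...]
# Run COMMAND only if FILE is missing or empty and store its output in FILE.
# Then write FILE to standard output so that the pipeline can continue.
cached() {
    cache_file="$1"
    shift
    if [ ! -s "$cache_file" ]; then
        # Write to a temporary file first, so that an interrupted run
        # does not leave a truncated cache behind.
        "$@" > "$cache_file.tmp" && mv "$cache_file.tmp" "$cache_file" \
            || { rm -f "$cache_file.tmp"; return 1; }
    fi
    cat "$cache_file"
}

# Usage sketch (assumes the relpipe tools from the example above):
#
#   collect() {
#       find -print0 | relpipe-in-filesystem --file path \
#           --streamlet tesseract --option 'language' 'eng' --as 'ocr_text'
#   }
#   cached index.rp collect \
#       | relpipe-tr-awk --relation 'filesystem' --where 'ocr_text ~ /GNU/' \
#       | relpipe-out-tabular
#
# Several cached files can be replayed together:
#
#   cat index.rp other.rp | relpipe-out-tabular
```

Delete the cache file whenever the underlying files change; this sketch makes no attempt to detect stale data.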

Please note that this is really fresh: it has not been released yet and is available only in the Mercurial repository. The streamlets used can be seen here: streamlet-examples. Even the upcoming v0.15 release is still a development version (it will work, but the API might change in the future, until we release v1.0, which will be stable and production ready).

Regarding performance: currently, processing is parallelized only over attributes (each streamlet instance runs in a separate process). In v0.15, it will also be parallelized over records (files, in this case).
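As a rough illustration of "parallel over attributes, sequential over records", the following plain-shell sketch computes two attributes of each file in separate background processes and waits for both before moving on to the next file. It only mimics the process model described above. The functions `attr_lines`, `attr_bytes` and `describe_files` are hypothetical stand-ins, and nothing here reflects how `relpipe-in-filesystem` actually communicates with its streamlets.

```shell
#!/bin/sh
# Hypothetical attribute extractors, standing in for streamlets.
attr_lines() { wc -l < "$1" | tr -d ' '; }
attr_bytes() { wc -c < "$1" | tr -d ' '; }

# Print "path<TAB>lines<TAB>bytes" for every file given as an argument.
describe_files() {
    tmp_dir="$(mktemp -d)" || return 1
    for file in "$@"; do
        # One background process per attribute of the current record...
        attr_lines "$file" > "$tmp_dir/lines" &
        attr_bytes "$file" > "$tmp_dir/bytes" &
        # ...but the records themselves are handled one after another.
        wait
        printf '%s\t%s\t%s\n' "$file" \
            "$(cat "$tmp_dir/lines")" "$(cat "$tmp_dir/bytes")"
    done
    rm -rf "$tmp_dir"
}

# Usage sketch:
#   describe_files ./*.pdf
```

With this model, the time per record is bounded by its slowest attribute (OCR, in the example above), which is why parallelizing over records as well helps when there are many files.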

Relational pipes, open standard and free software (C) 2018-2025 GlobalCode